OAUTH 2.0

There are many scenarios where you need to call a service that implements OAUTH 2.0, which supports roles and claims, for instance. Out of the box support for OAUTH 1.0 is really easy and there are many walkthroughs on this topic. I will show how to configure a Logic App to use OAUTH 2.0.

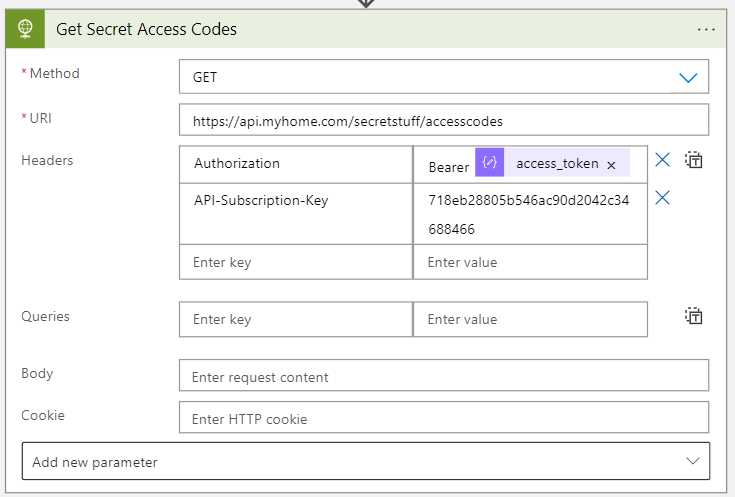

This is also related to my earlier post, "Getting a bearer token from AAD using Logic Apps", where I show you how to get an OAUTH 2.0 token using Logic Apps.

The scenario

Someone has set up a service that handles sensitive data. Simply protecting it with an API key is not considered secure enough. The provider has set up an Application Registration in Azure AD and provided you with a Client ID, a Client Secret and the Scope. All three are needed to authenticate.

How this is set up in AAD is out of scope for this post.

The solution

We decide to use Logic Apps and an HTTP connector. The connector has built-in support for OAUTH 1.0 but we are going to use 2.0.

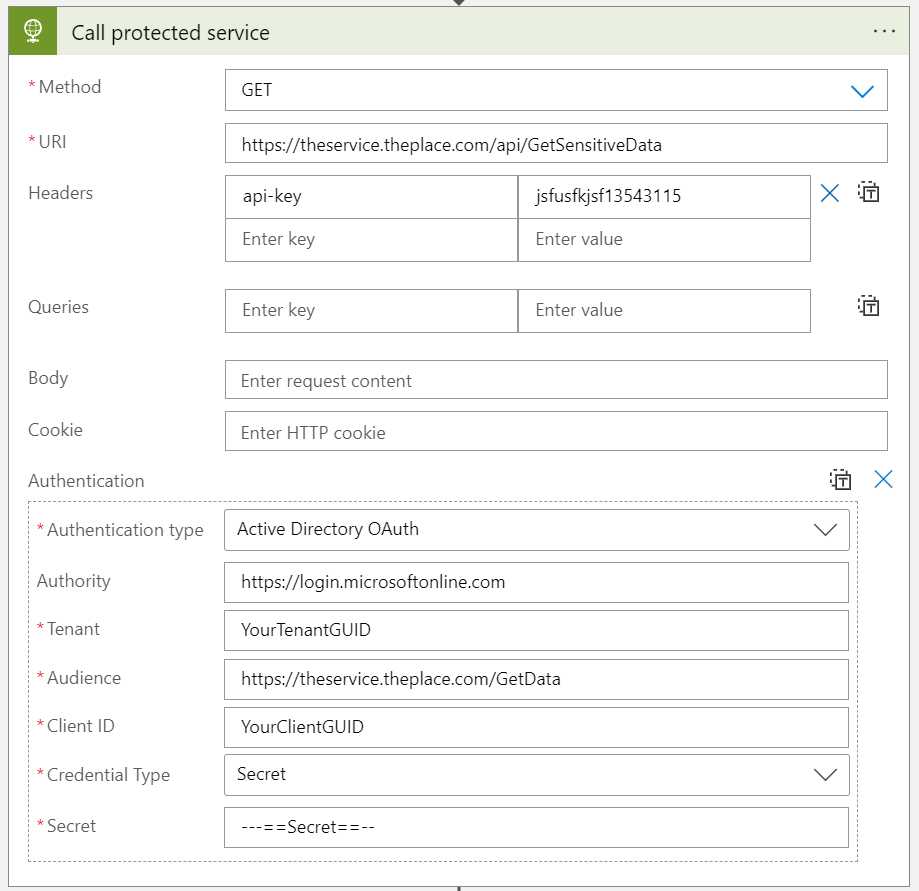

Here is a mock-up of the settings, let's go through them.

- URI: The URI of the service you need to call.

- Header: api-key: You usually need to provide an API key when calling an API. This setting is specific to the service you need to call.

- Authentication Type: Choose Active Directory OAuth

- Authority: Set to `https://login.microsoftonline.com` when using Azure AD.

- Tenant: Your Azure AD `TenantID`.

- Audience: Provide the `Scope` you have been sent. Make sure to omit the `/.default` at the end of the scope string, if present.

- Client ID: The `Client ID` you have been sent.

- Client Secret: The `Client Secret` you have been sent.

That is actually it!
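If you prefer the code view over the designer, the settings above end up in the `authentication` object of the HTTP action. The Python sketch below only builds and prints that JSON so you can compare it with your own workflow definition. The function name `build_http_action`, the `GET` method, the example URI and all the placeholder values are my assumptions for illustration, not values from the service in this post.

```python
import json


def build_http_action(uri, api_key, tenant_id, audience, client_id, client_secret,
                      authority="https://login.microsoftonline.com"):
    """Return a dict shaped like a Logic Apps HTTP action with Active Directory OAuth."""
    return {
        "type": "Http",
        "inputs": {
            "method": "GET",  # assumption: use whatever method the service expects
            "uri": uri,
            "headers": {"api-key": api_key},
            "authentication": {
                "type": "ActiveDirectoryOAuth",
                "authority": authority,
                "tenant": tenant_id,
                "audience": audience,  # the Scope WITHOUT the /.default suffix
                "clientId": client_id,
                "secret": client_secret,
            },
        },
    }


if __name__ == "__main__":
    # All values below are placeholders.
    action = build_http_action(
        uri="https://example.com/api/orders",
        api_key="<api-key>",
        tenant_id="<tenant-id>",
        audience="api://<application-id>",
        client_id="<client-id>",
        client_secret="<client-secret>",
    )
    print(json.dumps(action, indent=2))
```

In a real workflow you would probably not paste the secret in clear text but reference a secured parameter instead.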

Some notes

Scope

The strange and hard part for me was finding out how to configure the Scope. First off, you put the Scope in the Audience setting, which feels strange. Then you must provide the base Scope, without any suffix. This was different for me.

When you use Postman to get an OAUTH 2.0 token you send the scope with a `/.default` at the end of it to say “give me claims for the default scope”. When I set the Audience property like that I got an error. You need to remove the suffix.
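To make the difference concrete, here is a small Python sketch that puts the two side by side: the form body of a client credentials token request like the one you would send from Postman (scope with `/.default`), and the value you would put in the Audience setting (same string, suffix removed). The helper names `scope_to_audience` and `token_request` and the `api://my-app` scope are made up for this example. The sketch assumes the Azure AD v2.0 token endpoint and only builds the request, it does not send anything.

```python
from urllib.parse import urlencode

DEFAULT_SUFFIX = "/.default"


def scope_to_audience(scope):
    """Strip a trailing /.default so the value can be used as Audience."""
    scope = scope.strip()
    if scope.endswith(DEFAULT_SUFFIX):
        return scope[: -len(DEFAULT_SUFFIX)]
    return scope


def token_request(tenant_id, client_id, client_secret, scope):
    """Build (url, form_body) for a client credentials token request."""
    url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
    body = urlencode({
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
        "scope": scope,  # here the /.default suffix is kept
    })
    return url, body


if __name__ == "__main__":
    scope = "api://my-app/.default"  # placeholder scope
    url, body = token_request("<tenant-id>", "<client-id>", "<client-secret>", scope)
    print("Token request URL:   ", url)
    print("Token request body:  ", body)
    print("Logic App Audience:  ", scope_to_audience(scope))
```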

Authority

This is hard to find in the documentation, but the setting makes sense. If you are using standard Azure (not US military, China or Germany) this is always set to `https://login.microsoftonline.com`. You can find the other settings here.
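As a rough illustration of what choosing the Authority per cloud could look like, here is a small Python lookup. The endpoints for the non-standard clouds are the ones I know of at the time of writing, so treat them as assumptions and verify them against the documentation before use. The dictionary keys and the function name `authority_for` are made up for this sketch.

```python
# Authority per Azure cloud. Only the "global" entry comes from this post;
# the others are assumptions that should be checked against the documentation.
AUTHORITIES = {
    "global": "https://login.microsoftonline.com",
    "us_government": "https://login.microsoftonline.us",
    "china": "https://login.chinacloudapi.cn",
    "germany": "https://login.microsoftonline.de",
}


def authority_for(cloud="global"):
    """Return the Authority setting for the given cloud key."""
    try:
        return AUTHORITIES[cloud]
    except KeyError:
        known = ", ".join(sorted(AUTHORITIES))
        raise ValueError(f"Unknown cloud '{cloud}', expected one of: {known}")


if __name__ == "__main__":
    print(authority_for())  # standard Azure
```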